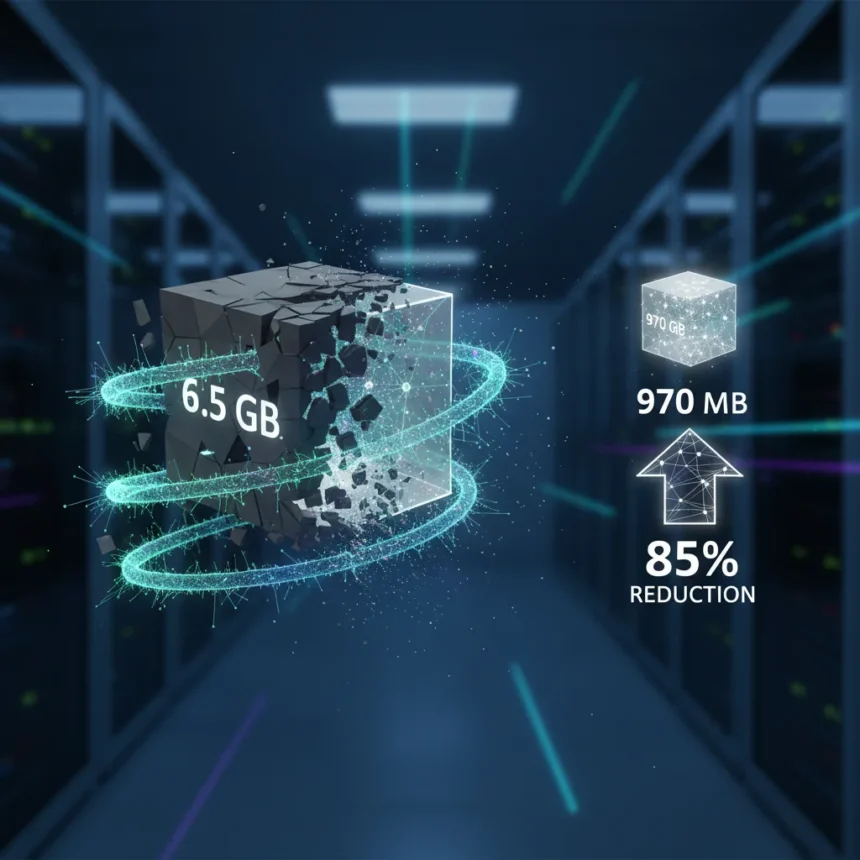

Neural Texture Compression is an Nvidia AI technology that uses a neural network to compress material textures ahead of time and decompress them on the fly, reducing VRAM usage while maintaining or improving image quality compared with traditional block compression. In Nvidia’s GTC demo, a scene’s texture memory drops from 6.5GB uncompressed to 970MB with Neural Texture Compression, an 85% reduction with visual parity to the original. The technology represents a significant shift in how graphics engines can handle texture data as the demands of photorealistic rendering keep growing.

Key Takeaways

- Neural Texture Compression reduces texture VRAM by 85% in Nvidia’s demo (6.5GB to 970MB) with visual parity.

- Compresses multiple PBR textures and mipmap chains together using a per-material optimized neural network.

- Requires Cooperative Vectors (an Nvidia Blackwell feature) for real-time performance; without it, decompression took 5.77ms per frame at 4K in one test.

- RTX NTC SDK v0.9.2 BETA is free on GitHub and includes compression tools, a viewer, and a glTF rendering demo.

- Outperforms traditional block compression and advanced image formats like AVIF and JPEG XL at low bitrates.

How Neural Texture Compression Works

Neural Texture Compression works by optimizing a small neural network, together with a compact representation of the texture data, separately for each material. That optimization (the compression step) happens ahead of time, while decompression runs in real time on the GPU. Rather than applying fixed compression algorithms like BC or BTC, the system learns compression tailored to each material’s specific characteristics, which lets it preserve visual detail that block compression typically loses.
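A minimal sketch of the decode side helps show what "a small per-material neural network" means in practice. It assumes, for illustration only, that a compressed material is a low-resolution grid of latent feature vectors plus a tiny two-layer MLP: a texel is reconstructed by bilinearly sampling the latents at (u, v) and running the MLP, which outputs every material channel at once. The grid size, channel count, and random placeholder weights are hypothetical, and the offline optimization that would actually fit the latents and weights to a material is not shown.

```python
import random

# Hypothetical sizes: a 4x4 grid of 8-dim latents decoded into 9 channels
# (e.g. RGB albedo, XYZ normal, roughness, metalness, AO). Real NTC layouts differ.
GRID_W, GRID_H, LATENT_DIM, HIDDEN, OUT_CHANNELS = 4, 4, 8, 16, 9

rng = random.Random(0)

def rand_matrix(rows, cols):
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]

# Placeholder "compressed" data; a real encoder would optimize these per material.
latents = [[[rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
            for _ in range(GRID_W)] for _ in range(GRID_H)]
w1, b1 = rand_matrix(HIDDEN, LATENT_DIM), [0.0] * HIDDEN
w2, b2 = rand_matrix(OUT_CHANNELS, HIDDEN), [0.0] * OUT_CHANNELS

def sample_latent(u, v):
    """Bilinearly interpolate the latent grid at normalized coords (u, v) in [0, 1]."""
    x, y = u * (GRID_W - 1), v * (GRID_H - 1)
    x0, y0 = int(x), int(y)
    x1, y1 = min(x0 + 1, GRID_W - 1), min(y0 + 1, GRID_H - 1)
    fx, fy = x - x0, y - y0
    out = []
    for k in range(LATENT_DIM):
        top = latents[y0][x0][k] * (1 - fx) + latents[y0][x1][k] * fx
        bottom = latents[y1][x0][k] * (1 - fx) + latents[y1][x1][k] * fx
        out.append(top * (1 - fy) + bottom * fy)
    return out

def matvec(m, vec, bias):
    """Dense layer: matrix-vector product plus bias."""
    return [sum(w * x for w, x in zip(row, vec)) + b for row, b in zip(m, bias)]

def decode_texel(u, v):
    """Random-access decode: four nearby latents plus a tiny MLP per texel."""
    hidden = [max(0.0, z) for z in matvec(w1, sample_latent(u, v), b1)]  # ReLU
    return matvec(w2, hidden, b2)  # all material channels for this texel at once

print(len(decode_texel(0.25, 0.75)))  # 9
```

Because each texel depends only on the four surrounding latent vectors and a fixed set of weights, any texel can be decoded independently at sampling time. The per-texel work is dominated by small matrix-vector products, which is the kind of operation Cooperative Vectors is designed to accelerate in shaders.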

The Nvidia flight helmet demo illustrates the efficiency gain: uncompressed textures occupy 272MB, block compression reduces this to 98MB, and Neural Texture Compression shrinks it further to just 11.37MB. The Intel T-Rex dinosaur demo reportedly shows similar results, with Neural Texture Compression again reaching 11.37MB from 272MB while visually outperforming block compression. These demos suggest that the technology can unlock 16X more texels (two additional levels of detail) at the same memory footprint, letting developers either reduce VRAM pressure or add substantially more visual fidelity.
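The quoted flight helmet numbers can be checked with a few lines of arithmetic. The 16X-texel figure is a separate claim rather than something derived from these sizes, so the sketch only shows why two extra levels of detail amount to 16X the texels: each level doubles resolution along both axes.

```python
# Flight helmet demo figures quoted above, in megabytes.
UNCOMPRESSED_MB = 272.0
BLOCK_COMPRESSED_MB = 98.0
NTC_MB = 11.37

def times_smaller(before_mb, after_mb):
    """How many times smaller the 'after' footprint is than the 'before' one."""
    return before_mb / after_mb

bc_vs_raw = times_smaller(UNCOMPRESSED_MB, BLOCK_COMPRESSED_MB)
ntc_vs_raw = times_smaller(UNCOMPRESSED_MB, NTC_MB)
ntc_vs_bc = times_smaller(BLOCK_COMPRESSED_MB, NTC_MB)

# Each extra level of detail doubles width and height, i.e. 4x the texels.
EXTRA_LEVELS = 2
extra_texels = 4 ** EXTRA_LEVELS

print(f"BC vs uncompressed:  {bc_vs_raw:.1f}x smaller")   # 2.8x
print(f"NTC vs uncompressed: {ntc_vs_raw:.1f}x smaller")  # 23.9x
print(f"NTC vs BC:           {ntc_vs_bc:.1f}x smaller")   # 8.6x
print(f"{EXTRA_LEVELS} extra levels of detail = {extra_texels}x texels")
```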

The Compute Cost Challenge

The critical bottleneck for Neural Texture Compression is GPU compute demand: without hardware acceleration, decompressing textures in real time requires significant processing power. In Compusemble’s Intel dinosaur demo running at 4K, the Neural Texture Compression pass took 5.77ms per frame without Cooperative Vectors, which is impractical for real-time gaming. With Cooperative Vectors enabled, the same pass drops to 0.11ms, a 98% reduction. Nvidia’s own 4K DLSS demo shows a 49% improvement with Cooperative Vectors, with frame time falling from 1.44ms to 0.74ms.
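The percentages can be reproduced from the quoted timings. For context, the sketch also expresses each timing as a share of a 60 fps frame budget (about 16.67ms); that budget is an illustrative assumption, not part of the measurements.

```python
# Timings quoted above, in milliseconds: (without, with) Cooperative Vectors.
MEASUREMENTS = {
    "Compusemble Intel dinosaur demo, 4K": (5.77, 0.11),
    "Nvidia 4K DLSS demo": (1.44, 0.74),
}

# Illustrative assumption: a 60 fps target leaves ~16.67 ms per frame.
FRAME_BUDGET_MS = 1000.0 / 60.0

def percent_reduction(before_ms, after_ms):
    """Percentage of time saved going from before_ms to after_ms."""
    return 100.0 * (before_ms - after_ms) / before_ms

def budget_share(ms):
    """Share of the 60 fps frame budget consumed, in percent."""
    return 100.0 * ms / FRAME_BUDGET_MS

for name, (without_cv, with_cv) in MEASUREMENTS.items():
    print(f"{name}: {percent_reduction(without_cv, with_cv):.1f}% less time; "
          f"{budget_share(without_cv):.1f}% -> {budget_share(with_cv):.1f}% of a 60 fps frame")
```

Seen this way, the unaccelerated 5.77ms pass alone would eat roughly a third of a 60 fps frame before any other rendering work, which explains why it is impractical for real-time gaming.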

Cooperative Vectors is an Nvidia Blackwell architecture feature essential for making Neural Texture Compression viable on modern hardware. Older GPU architectures lack this capability, which keeps the technology out of reach for previous-generation RTX 40-series and earlier cards. This hardware requirement effectively gates Neural Texture Compression to Blackwell-based GPUs such as the RTX 50-series, making it a forward-looking feature rather than an immediate solution for most existing systems.

Neural Texture Compression vs. Traditional Block Compression

Block compression formats like BC and BTC have dominated GPU texture handling for decades because they are simple and fast to decode. However, they sacrifice quality by quantizing each block’s colors to a small set of values and applying the same fixed scheme to every material. Neural Texture Compression surpasses these methods by learning material-specific compression, preserving detail that block compression discards.
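The "fixed pattern" limitation is easy to see in BC1 (also known as DXT1), the simplest BC format. Each 4x4 block is stored in 8 bytes: two RGB565 endpoint colors plus a 2-bit index per texel, so a block can hold at most four distinct colors regardless of the material. The sketch follows the standard BC1 layout but uses plain integer rounding for the interpolated colors; hardware decoders round slightly differently.

```python
import struct

def expand_565(c):
    """Expand a 16-bit RGB565 color to 8-bit-per-channel RGB."""
    r, g, b = (c >> 11) & 0x1F, (c >> 5) & 0x3F, c & 0x1F
    return ((r << 3) | (r >> 2), (g << 2) | (g >> 4), (b << 3) | (b >> 2))

def decode_bc1_block(block):
    """Decode an 8-byte BC1 block into 16 RGB texels (row-major 4x4)."""
    c0_raw, c1_raw, indices = struct.unpack("<HHI", block)
    c0, c1 = expand_565(c0_raw), expand_565(c1_raw)
    if c0_raw > c1_raw:
        # Four-color mode: two extra colors at 1/3 and 2/3 between the endpoints.
        c2 = tuple((2 * a + b) // 3 for a, b in zip(c0, c1))
        c3 = tuple((a + 2 * b) // 3 for a, b in zip(c0, c1))
    else:
        # Three-color mode: midpoint plus black (transparent when alpha is used).
        c2 = tuple((a + b) // 2 for a, b in zip(c0, c1))
        c3 = (0, 0, 0)
    palette = [c0, c1, c2, c3]
    # Every texel picks one of only four palette entries via its 2-bit index.
    return [palette[(indices >> (2 * i)) & 0b11] for i in range(16)]

# Pure red and pure blue endpoints; every texel uses index 2 (0b10).
block = struct.pack("<HHI", 0xF800, 0x001F, 0xAAAAAAAA)
texels = decode_bc1_block(block)
print(texels[0], len(set(texels)))  # (170, 0, 85) 1
```

However detailed the source texels were, the decoded block is limited to colors on a single line between two endpoints, which is where block compression loses the detail that a learned, material-specific scheme can retain.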

The quality advantage extends beyond traditional alternatives. Neural Texture Compression outperforms advanced image formats like AVIF and JPEG XL at low bitrates, achieving better visual fidelity while retaining random-access, real-time GPU decompression. This makes it uniquely suited to gaming and real-time rendering, where texture access patterns are unpredictable and decompression must happen on demand without stalling the GPU pipeline.
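Random access matters because the GPU must be able to locate any texel’s data directly, without decoding anything else first. For fixed-rate block formats this is a simple calculation, sketched below with the standard BC1 (8 bytes) and BC7 (16 bytes) block sizes and assuming a linear layout; real GPUs usually use tiled or swizzled layouts, so actual addresses differ, but they remain directly computable. Variable-length formats such as AVIF and JPEG XL offer no such per-texel formula, so they must be decoded before the GPU can sample them; random access is the property credited to Neural Texture Compression that they lack.

```python
def bc_block_offset(x, y, width, bytes_per_block):
    """Byte offset of the 4x4 block holding texel (x, y), assuming a linear layout."""
    blocks_per_row = (width + 3) // 4  # partial edge blocks are padded to full size
    block_x, block_y = x // 4, y // 4
    return (block_y * blocks_per_row + block_x) * bytes_per_block

# Texel (1000, 2000) in a 4096-texel-wide texture.
print(bc_block_offset(1000, 2000, 4096, 8))   # BC1: 4098000
print(bc_block_offset(1000, 2000, 4096, 16))  # BC7: 8196000
```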

Developer Access and Integration

Nvidia released the RTX NTC SDK v0.9.2 BETA as a free download on GitHub. The toolkit includes ntc-cli for compression and decompression, the NTC Explorer viewer for inspecting compressed textures, and the NTC Renderer, a glTF rendering demo that shows Neural Texture Compression in action. Building it requires Visual Studio and Git with Git LFS support, so it targets developers willing to experiment with the emerging technology.

The SDK includes a D3D12 path optimized for Cooperative Vectors, which developers can test on an RTX 5090 with beta drivers. This early access lets game studios and engine developers evaluate Neural Texture Compression before it becomes mainstream, though real-world integration remains limited. The technology has been reported on since around 2022 and was demonstrated at CES, but widespread adoption depends on Blackwell GPU availability and developer confidence in the approach.

Why This Matters for Game Development

Texture memory is a bottleneck that grows worse every generation. Modern AAA games already push VRAM limits, and photorealistic rendering demands ever-larger texture datasets. Neural Texture Compression offers developers a choice: reduce VRAM consumption to support lower-end hardware, or invest the freed memory in additional detail and visual fidelity. For a scene using 6.5GB of textures, cutting that to 970MB unlocks either lower system requirements or room for roughly 6.7 times as much texture data at the same memory cost.
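The GTC figures can be worked through directly. The sketch assumes decimal units (1GB = 1000MB); with binary units the capacity multiplier comes out slightly higher.

```python
# GTC demo figures, assuming decimal units (1 GB = 1000 MB).
MB_PER_GB = 1000
uncompressed_mb = 6.5 * MB_PER_GB
ntc_mb = 970.0

# Share of the original footprint that compression removes.
reduction_pct = 100.0 * (1 - ntc_mb / uncompressed_mb)
# How much NTC-compressed texture data fits in the original 6.5GB budget.
capacity_multiplier = uncompressed_mb / ntc_mb

print(f"Reduction: {reduction_pct:.1f}%")                      # 85.1%
print(f"Capacity in the same budget: {capacity_multiplier:.1f}x")  # 6.7x
```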

The technology could also simplify asset pipelines. Instead of maintaining multiple texture compression formats and quality tiers, developers could compress once with Neural Texture Compression and let the GPU handle decompression on demand. That would reduce storage requirements, speed up asset loading, and simplify version control for large texture libraries.

Is Neural Texture Compression ready for games?

Neural Texture Compression is still in beta and requires Blackwell GPUs with Cooperative Vectors support. Previous-generation RTX 40-series cards cannot use it effectively. However, the technology is mature enough for developer evaluation and experimentation, and the free SDK and demo applications show that Nvidia is serious about making it accessible to studios willing to invest in integration work.

How much VRAM does Neural Texture Compression actually save?

In Nvidia’s GTC demo, textures shrink from 6.5GB to 970MB, a reduction of approximately 85%. The Nvidia flight helmet demo shows 272MB compressed to 11.37MB with Neural Texture Compression, compared with 98MB using traditional block compression. Real-world savings depend on material complexity and on how well the network learns each material, but the demos suggest that a reduction of roughly 8X versus block compression is achievable.

Neural Texture Compression represents a genuine leap forward in addressing VRAM constraints, but it is not a magic solution. Success depends on hardware support, developer adoption, and real-world performance validation across diverse game assets. For studios targeting next-generation hardware and willing to optimize their pipelines, the technology offers substantial breathing room in an era when texture memory demands seem limitless.

Edited by the All Things Geek team.

Source: Tom's Hardware