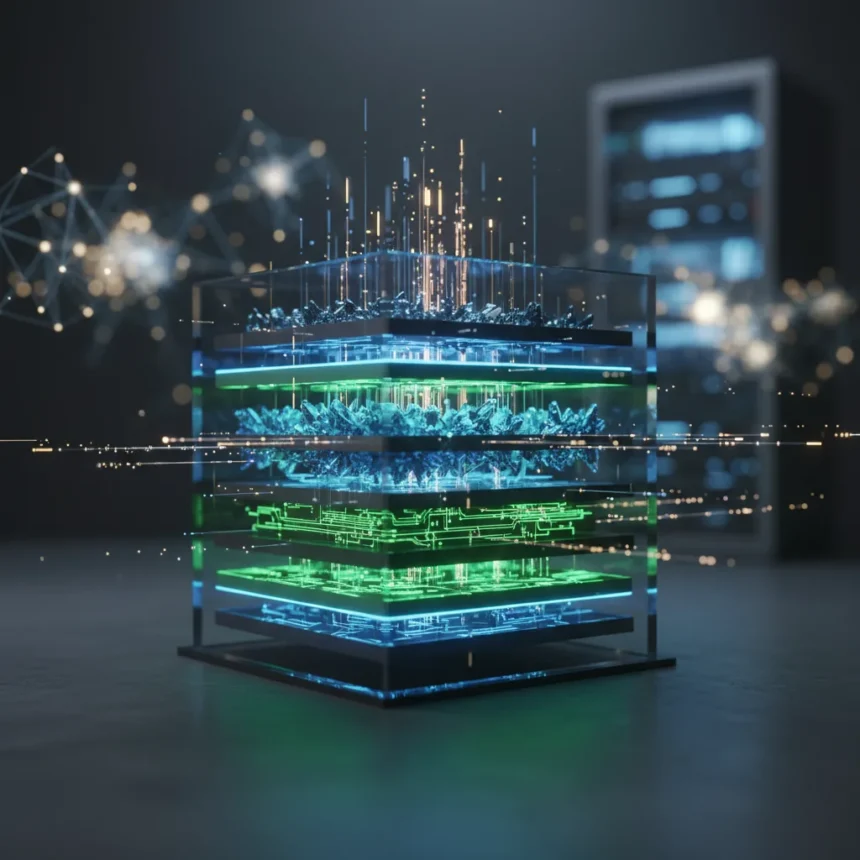

The 3D memory architecture represents a fundamental shift in how systems handle the exploding data demands of artificial intelligence. By reviving principles from older camera sensor technology and fusing NAND flash storage with DRAM capabilities, this hybrid approach tackles one of AI’s most pressing constraints: the memory wall that threatens to make advanced systems prohibitively expensive and power-hungry.

Key Takeaways

- 3D memory architecture combines NAND and DRAM in a single hybrid structure to reduce costs and boost performance.

- The approach resurrects decades-old camera sensor design principles for modern AI workloads.

- Unlimited endurance claims target the write-cycle limits that wear out traditional NAND flash.

- AI memory shortages are already driving up smartphone costs and hardware complexity.

- This innovation could reshape how data centers and edge devices handle inference and training.

Why AI’s Memory Crisis Demands a New Approach

Artificial intelligence systems are drowning in data. The sheer volume of parameters, embeddings, and activation states required for modern large language models and vision systems has created what industry observers call a memory wall. Traditional memory hierarchies—separate DRAM for speed and NAND for capacity—force costly trade-offs between latency and storage density. When Micron’s leadership warned that AI is still in its very early innings and will require exponentially more memory, they were signaling a crisis that extends beyond data centers into consumer devices. The memory crunch is already making smartphones more expensive as manufacturers scramble to pack enough RAM and storage to handle local AI inference.

The 3D memory architecture aims to solve this by eliminating the false choice between speed and capacity. Rather than maintaining separate memory tiers, the hybrid structure interleaves NAND and DRAM functionality within a single three-dimensional stack. In principle, this reduces latency penalties, cuts manufacturing complexity, and opens pathways to dramatically higher density at lower cost.

How 3D Memory Architecture Works

The 3D memory architecture achieves its breakthrough by borrowing from an unexpected source: image sensor technology. Decades-old camera sensor design principles, particularly the stacked photodiode architecture used in digital imaging, provide the blueprint for vertically integrating memory cells. By applying these proven 3D stacking techniques to memory, engineers can layer NAND flash cells alongside DRAM cells in the same die, creating a unified storage-and-speed system.

The resulting hybrid structure offers three distinct advantages. First, it dramatically increases memory density per unit area, which matters when silicon real estate costs money and generates heat. Second, the tight coupling between NAND and DRAM eliminates the bandwidth bottleneck that occurs when data must shuttle between separate chips. Third, the architecture enables what manufacturers claim is unlimited endurance, a claim that directly addresses one of NAND flash’s traditional weaknesses: each cell tolerates only a finite number of write cycles before it begins to wear out. A memory system that can sustain continuous writes without degradation would be transformative for AI training workloads, where gradient updates rewrite model parameters on every training step.
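To put the second advantage in rough numbers, here is a minimal sketch of how long it takes to move a block of data across a link at a given bandwidth. The payload size and both bandwidth figures are hypothetical values chosen only to show the scaling; they are not measurements of any real part, and the helper name `transfer_seconds` is invented for this illustration.

```python
def transfer_seconds(payload_gb: float, bandwidth_gb_per_s: float) -> float:
    """Time to move a payload across a link, ignoring latency and protocol overhead."""
    if bandwidth_gb_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return payload_gb / bandwidth_gb_per_s


if __name__ == "__main__":
    payload_gb = 16.0  # hypothetical working set, not a figure from the article
    # Hypothetical link speeds (GB/s), assumed purely for comparison.
    links = {
        "separate chips (assumed)": 8.0,
        "same stack (assumed)": 64.0,
    }
    for name, bandwidth in links.items():
        seconds = transfer_seconds(payload_gb, bandwidth)
        print(f"{name}: {seconds:.2f} s")
```

The point of the sketch is only that transfer time falls in direct proportion to available bandwidth, which is why shortening the path between storage and working memory matters.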

3D Memory Architecture vs. Traditional Memory Hierarchies

Today’s systems rely on a tiered approach: ultra-fast but expensive DRAM for active computation, and slower but denser NAND for persistent storage. This separation made sense when memory demands were modest. But modern AI flips the economics. A large language model’s weights might occupy 70 gigabytes—far too much for practical DRAM budgets on consumer hardware, yet too performance-critical to live solely on NAND. The 3D memory architecture collapses this hierarchy by making NAND fast enough and DRAM dense enough that a single unified layer handles both roles.
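The 70 gigabyte figure is easy to sanity-check: weight storage is roughly the parameter count multiplied by the bytes used per parameter. The sketch below assumes a hypothetical 35-billion-parameter model (a size picked because it lands on 70 GB at 16-bit precision, not one named in the source) and ignores activations, caches, and runtime overhead.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(num_params: float, precision: str) -> float:
    """Return weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    params = 35e9  # assumption: hypothetical 35-billion-parameter model
    for precision in BYTES_PER_PARAM:
        size_gb = weight_footprint_gb(params, precision)
        print(f"{precision}: {size_gb:.1f} GB")
```

At 16-bit precision the assumed model needs 70 GB for weights alone, which is several times the 12 or 16 gigabytes of RAM found in current flagship phones.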

Traditional NAND flash suffers from wear-out: each cell can endure only a finite number of write cycles before reliability degrades. DRAM, by contrast, refreshes constantly but offers no persistence. The hybrid 3D approach claims to combine DRAM’s infinite write endurance with NAND’s density and persistence. If this holds in production, it eliminates a major constraint in AI system design and could enable new architectures that were previously impossible due to memory limitations.
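A back-of-the-envelope calculation shows why wear-out matters for write-heavy workloads. The sketch below estimates how long a conventional flash device lasts before its rated write cycles are used up. Every input is a hypothetical value for illustration: real cycle ratings vary widely by cell type, and the write amplification factor (extra internal writes per host write) depends on the controller and workload.

```python
def nand_lifetime_years(
    capacity_gb: float,
    rated_write_cycles: float,
    host_writes_gb_per_day: float,
    write_amplification: float = 1.0,
) -> float:
    """Estimate years until the rated write cycles are exhausted.

    Assumes perfectly even wear across all cells, which real devices
    only approximate.
    """
    # Total host data the device can absorb before reaching its rating.
    total_writable_gb = capacity_gb * rated_write_cycles / write_amplification
    days = total_writable_gb / host_writes_gb_per_day
    return days / 365.0


if __name__ == "__main__":
    # All four inputs are assumptions, not vendor specifications.
    years = nand_lifetime_years(
        capacity_gb=1000.0,
        rated_write_cycles=3000.0,
        host_writes_gb_per_day=10_000.0,
        write_amplification=2.0,
    )
    print(f"Estimated lifetime: {years:.2f} years")
```

With these assumed inputs the device would reach its rated limit in about 150 days. A memory with effectively unlimited endurance removes this term from the design budget entirely, which is the substance of the claim.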

What This Means for AI Hardware and Beyond

The implications ripple across the entire computing stack. Data centers could deploy smaller, cheaper memory subsystems without sacrificing throughput. Edge devices—smartphones, embedded systems, autonomous vehicles—could run more sophisticated AI models locally, reducing latency and dependence on cloud connectivity. Consumer hardware might finally break free from the RAM arms race, where flagship phones now demand 12 or 16 gigabytes just to stay competitive.

The 3D memory architecture also opens doors to new workload patterns. Training pipelines that currently require expensive GPU memory could become feasible on more modest hardware. Inference, the phase where trained models process real-world data, could shift from cloud servers back to edge devices, improving privacy and reducing network strain. Systems that combine local reasoning with cloud-scale training become economically viable when memory costs and power consumption drop.

Challenges and Remaining Questions

The technology is still in development, and significant engineering hurdles remain. Manufacturing 3D memory stacks at scale requires precision beyond current high-volume processes. Thermal management becomes critical when NAND and DRAM cells are stacked in close proximity, because heat from one layer affects the other. Integration with existing silicon ecosystems and software frameworks will demand careful coordination. The unlimited endurance claim, while compelling, requires real-world validation under sustained production loads.

Adoption timelines are also uncertain. Semiconductor fabs must retool to support 3D stacking, a capital-intensive process. Memory manufacturers will need to validate the approach across multiple generations before customers trust it in mission-critical systems. Yet the economic pressure is undeniable: if 3D memory architecture delivers even half its promised benefits, it becomes a competitive necessity.

Is 3D memory architecture the final solution to AI’s memory crisis?

No single technology solves every constraint. 3D memory architecture addresses density, speed, and endurance, but power consumption, thermal design, and cost per gigabyte still require optimization. However, it represents a genuine breakthrough—a fundamentally different approach rather than an incremental improvement. Combined with advances in processor design and algorithmic efficiency, it could be the missing piece that makes AI systems practical at every scale.

When will 3D memory architecture reach consumer devices?

Production timelines depend on manufacturing readiness and customer validation. Early deployments in data center and server applications could plausibly arrive within 2 to 3 years, given the high-volume demand and capital budgets in that sector. Consumer devices may follow 12 to 18 months later, once the technology matures and costs decline further. The manufacturers developing it have not disclosed an exact timeline.

How does 3D memory architecture compare to other advanced memory technologies?

Other approaches, such as HBM (high-bandwidth memory) and emerging technologies like magnetoresistive RAM, focus on specific constraints. HBM prioritizes bandwidth; 3D memory architecture prioritizes density and endurance. The technologies are complementary rather than competitive: a system might use 3D memory for bulk storage and HBM for the hottest data paths. The real advantage of 3D memory is its unified approach, which reduces the number of separate memory types a system has to manage.

The 3D memory architecture is not a silver bullet, but it is a genuinely novel solution to a problem that threatens to derail AI’s expansion. By resurrecting camera sensor principles and fusing two seemingly incompatible memory types, engineers have found a path forward that could reshape hardware design for the next decade. The memory wall that has constrained AI progress may finally have a credible answer.

Edited by the All Things Geek team.

Source: TechRadar