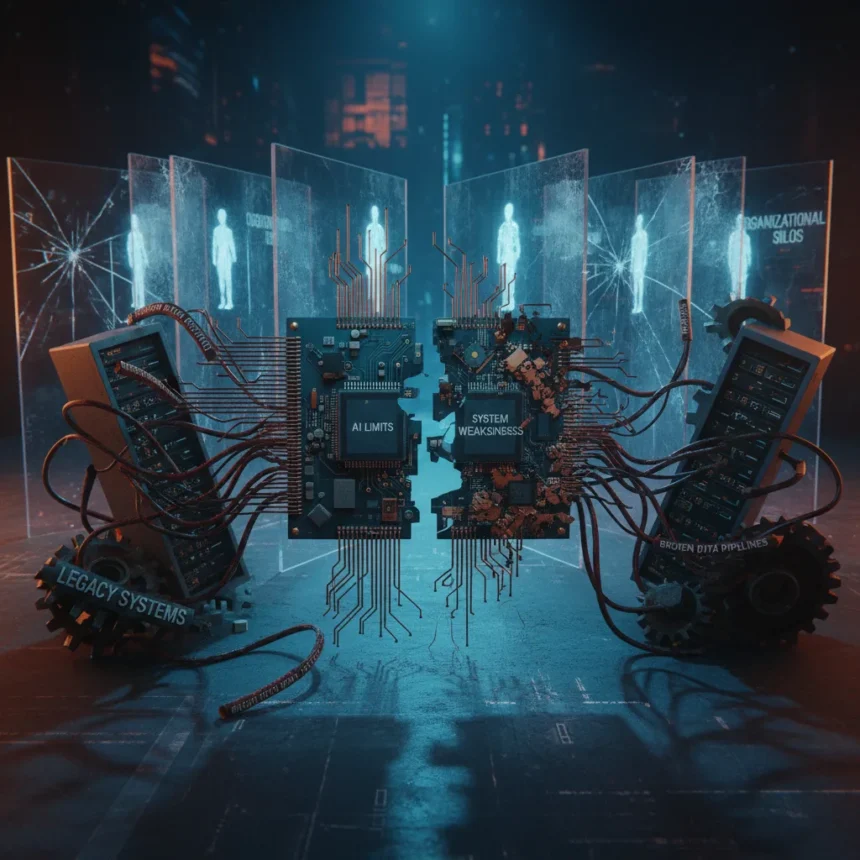

Enterprise AI failures aren’t a sign that artificial intelligence itself is broken—they expose something far more damaging: the crumbling infrastructure and data chaos underlying most organizations. When a company’s AI project collapses, the culprit is almost never the algorithm. It’s the enterprise systems feeding it garbage data, the legacy platforms that can’t talk to each other, and the organizational silos that prevent proper implementation.

Key Takeaways

- Enterprise AI failures reveal data quality and integration problems, not AI capability limitations.

- Most organizations lack the data infrastructure required to support production AI systems.

- Advanced companies don’t fail less—they identify and address failures faster.

- Legacy systems and organizational silos actively sabotage AI deployment success.

- The real investment gap isn’t in AI tools; it’s in foundational data engineering.

The Data Problem Masquerading as an AI Problem

Enterprises don’t have an AI problem—they have a data problem. This distinction matters because it reframes how companies should approach their AI investments. When a machine learning model underperforms in production, the instinct is to blame the model or the algorithm. The actual culprit is usually upstream: incomplete data, inconsistent schemas, missing historical records, or data pipelines that were never designed to feed real-time systems. A sophisticated AI model cannot compensate for garbage inputs, no matter how advanced the training process.

Organizations spend millions licensing enterprise AI platforms while their data remains siloed across incompatible systems, poorly documented, and often contaminated by duplicate or conflicting records. The gap between what these companies are spending and what they’re actually fixing is enormous. They’re treating AI as a software purchase when they should be treating it as a data engineering project. Until the foundation is solid, no amount of algorithmic sophistication will deliver results.
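To make the data problem concrete, here is a minimal sketch of the kind of audit that could run before any model is trained. It counts missing required fields, duplicated keys, and duplicates whose copies disagree. The field names (`customer_id`, `email`, `signup_date`) and the `audit_records` helper are assumptions made for illustration, not part of any specific platform.

```python
from collections import Counter, defaultdict

# Hypothetical schema: these field names are assumptions for illustration.
REQUIRED_FIELDS = ("customer_id", "email", "signup_date")


def audit_records(records):
    """Count missing required fields, duplicate keys, and conflicting duplicates."""
    missing = Counter()
    by_key = defaultdict(list)

    for record in records:
        for field in REQUIRED_FIELDS:
            # Treat both None and the empty string as "missing".
            if record.get(field) in (None, ""):
                missing[field] += 1
        key = record.get("customer_id")
        if key not in (None, ""):
            by_key[key].append(record)

    duplicates = {k: v for k, v in by_key.items() if len(v) > 1}
    # A duplicated key is "conflicting" when its copies disagree on any field.
    conflicting = sorted(
        k for k, copies in duplicates.items()
        if any(copy != copies[0] for copy in copies[1:])
    )
    return {
        "total": len(records),
        "missing": dict(missing),
        "duplicate_keys": sorted(duplicates),
        "conflicting_keys": conflicting,
    }


if __name__ == "__main__":
    sample = [
        {"customer_id": "c1", "email": "a@example.com", "signup_date": "2023-01-05"},
        {"customer_id": "c1", "email": "a@example.org", "signup_date": "2023-01-05"},
        {"customer_id": "c2", "email": "", "signup_date": "2023-02-11"},
        {"customer_id": "c3", "email": "c@example.com", "signup_date": None},
        {"customer_id": "c3", "email": "c@example.com", "signup_date": None},
    ]
    print(audit_records(sample))
```

On this sample the report shows one missing email, two missing signup dates, `c1` and `c3` as duplicated keys, and only `c1` as conflicting, because its two copies carry different email addresses. A real audit would cover far more rules, but even a check this small surfaces the kinds of defects described above.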

Why Advanced Organizations See Failures Faster

The most advanced organizations aren’t failing less frequently than their peers. They’re detecting failures sooner, which inverts the usual picture of AI maturity. A mature AI operation doesn’t mean perfect deployments. It means rapid failure detection, quick rollback capability, and systematic learning from what went wrong. Some enterprises have already had to roll back AI customer service tools after discovering they weren’t ready for production, and they learned this faster because they had monitoring in place.

This creates a paradox: companies that appear to be struggling with AI are often the ones making genuine progress. They’re testing in production, measuring outcomes rigorously, and pulling the plug when something isn’t working. Meanwhile, organizations that claim AI success may simply lack the observability to see what’s actually failing. They’ve deployed models into the wild without proper monitoring, so failures go undetected until customers complain or revenue drops.
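One way to picture "rapid failure detection" is a rolling check of a production outcome against a known baseline. The sketch below is a simplified illustration under stated assumptions: the `RollbackMonitor` class, its thresholds, and the idea of a single binary success signal are all hypothetical, and a real system would track several business metrics.

```python
from collections import deque


class RollbackMonitor:
    """Tracks a rolling success rate and flags when a rollback looks warranted."""

    def __init__(self, baseline_rate, tolerance=0.05, window=100, min_samples=20):
        self.baseline_rate = baseline_rate  # rate observed before the AI rollout
        self.tolerance = tolerance          # allowed drop below the baseline
        self.min_samples = min_samples      # avoid reacting to a handful of events
        self.outcomes = deque(maxlen=window)  # only the most recent outcomes count

    def record(self, success):
        self.outcomes.append(1 if success else 0)

    def current_rate(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def should_roll_back(self):
        # Wait for enough evidence before recommending anything.
        if len(self.outcomes) < self.min_samples:
            return False
        return self.current_rate() < self.baseline_rate - self.tolerance


if __name__ == "__main__":
    monitor = RollbackMonitor(baseline_rate=0.90, tolerance=0.05, window=50)
    # 14 resolved conversations and 6 unresolved ones: a 70% success rate.
    for resolved in [True] * 14 + [False] * 6:
        monitor.record(resolved)
    print(monitor.current_rate(), monitor.should_roll_back())
```

The design choice worth noting is the `min_samples` guard: without it, a few early failures would trigger a rollback before there is enough evidence either way.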

Enterprise AI Failures and the System Architecture Problem

The root causes of enterprise AI failures run deeper than data quality alone. Legacy systems that were never designed to interoperate create fundamental bottlenecks. A company running customer data in one database, financial records in another, and operational logs in a third cannot easily feed a unified AI system. Each integration point becomes a translation layer, each translation can introduce errors or drop records, and the cumulative effect is a model trained on corrupted or incomplete information.
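The three-database scenario can be sketched in a few lines. The example below joins customer, financial, and operational records on a shared key and reports which keys each source is missing, which is where incomplete training data tends to come from. The `join_sources` helper, the `customer_id` key, and the sample rows are assumptions for illustration only.

```python
def join_sources(customers, invoices, events, key="customer_id"):
    """Merge three sources on a shared key and report what failed to line up."""
    sources = {"customers": customers, "invoices": invoices, "events": events}
    keys_by_source = {
        name: {row[key] for row in rows if row.get(key) is not None}
        for name, rows in sources.items()
    }
    shared = set.intersection(*keys_by_source.values())
    all_keys = set.union(*keys_by_source.values())

    # Only keys present in every source produce a complete, unified record.
    unified = {k: {} for k in shared}
    for name, rows in sources.items():
        for row in rows:
            if row.get(key) in shared:
                unified[row[key]].setdefault(name, []).append(row)

    # For each source, list the keys that exist elsewhere but not here.
    missing_from = {
        name: sorted(all_keys - keys) for name, keys in keys_by_source.items()
    }
    return unified, missing_from


if __name__ == "__main__":
    customers = [{"customer_id": "c1"}, {"customer_id": "c2"}, {"customer_id": "c3"}]
    invoices = [{"customer_id": "c1", "amount": 120}, {"customer_id": "c2", "amount": 80},
                {"customer_id": "c4", "amount": 45}]
    events = [{"customer_id": "c1", "event": "login"}, {"customer_id": "c3", "event": "ticket"}]

    unified, missing_from = join_sources(customers, invoices, events)
    print(sorted(unified))
    print(missing_from)
```

In this sample only `c1` appears in all three sources, so a model trained on the unified view would see one customer out of four. The `missing_from` report makes that loss visible instead of letting it pass silently.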

Organizational silos compound this technical problem. The data science team doesn’t have visibility into what the infrastructure team is actually capable of supporting. The business stakeholders setting requirements don’t understand the data engineering constraints. The security team imposes access controls that can prevent teams from combining the datasets needed for model training. These aren’t AI problems. They’re organizational design problems that surface when you try to deploy AI.

The Investment Mismatch: Spending on Tools, Not Infrastructure

Most enterprise AI spending goes toward software licenses and model training, not toward the foundational infrastructure that would actually enable success. Companies buy expensive AI platforms and hire data scientists, but they don’t invest in the data warehousing, pipeline automation, and governance systems that would make those tools useful. It’s like buying a Formula 1 engine and expecting it to run in a car with a broken transmission.

The real bottleneck isn’t computing power or model sophistication. It’s the ability to move data reliably from source systems into a clean, unified format that AI systems can actually use. This requires investment in data engineering talent, ETL tools, metadata management, and governance frameworks—the unglamorous plumbing that doesn’t show up in executive presentations but determines whether AI projects succeed or fail.

Can enterprise AI failures be prevented?

Enterprise AI failures can be substantially reduced by addressing the underlying data and system integration problems before deploying AI. This means auditing data quality, mapping system dependencies, and building proper data pipelines. It also requires organizational changes: breaking down silos, establishing clear ownership of data quality, and creating feedback loops between AI teams and infrastructure teams. Prevention isn’t glamorous, but it’s far cheaper than failure.
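Mapping system dependencies can start as something very small. The sketch below uses Python’s standard `graphlib` module to order a hypothetical set of systems so that each one comes after everything it depends on, and to list everything upstream of a given model. The system names and the `DEPENDENCIES` map are invented for illustration.

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each key depends on the systems in its set.
DEPENDENCIES = {
    "crm_export": set(),
    "billing_export": set(),
    "ops_logs": set(),
    "customer_table": {"crm_export", "billing_export"},
    "feature_store": {"customer_table", "ops_logs"},
    "churn_model": {"feature_store"},
}


def build_order(dependencies):
    """Return an order in which every system comes after what it depends on.

    static_order() raises graphlib.CycleError if the map contains a cycle.
    """
    return list(TopologicalSorter(dependencies).static_order())


def upstream_of(target, dependencies):
    """Collect every system the target depends on, directly or indirectly."""
    seen = set()
    stack = [target]
    while stack:
        node = stack.pop()
        for dep in dependencies.get(node, ()):
            if dep not in seen:
                seen.add(dep)
                stack.append(dep)
    return seen


if __name__ == "__main__":
    print(build_order(DEPENDENCIES))
    print(sorted(upstream_of("churn_model", DEPENDENCIES)))
```

Here `upstream_of("churn_model", DEPENDENCIES)` returns all five other systems, which is the practical point: a data quality problem in any one of them can reach the model.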

What’s the difference between advanced and struggling AI organizations?

Advanced organizations have better failure detection and faster rollback capabilities. They monitor AI systems in production, measure outcomes against business metrics, and kill projects that aren’t working. Struggling organizations often lack this visibility entirely. They deploy AI systems without proper monitoring and don’t realize they’re failing until the damage is visible to customers.

Why do legacy systems cause enterprise AI failures?

Legacy systems create data silos and incompatibilities that make it hard to feed clean, unified data to AI models. When customer data lives in one platform, financial data in another, and operational logs in a third, integrating them for AI training becomes a slow, error-prone exercise in manual translation. This isn’t an AI problem. It’s a system architecture problem that AI simply exposes.

The uncomfortable truth is that enterprise AI failures reveal organizational weaknesses that have been accumulating for years. Companies can’t blame AI for failing when their data is a mess, their systems don’t talk to each other, and their teams work in isolation. The path forward isn’t buying better AI tools—it’s fixing the broken plumbing underneath.

Edited by the All Things Geek team.

Source: TechRadar