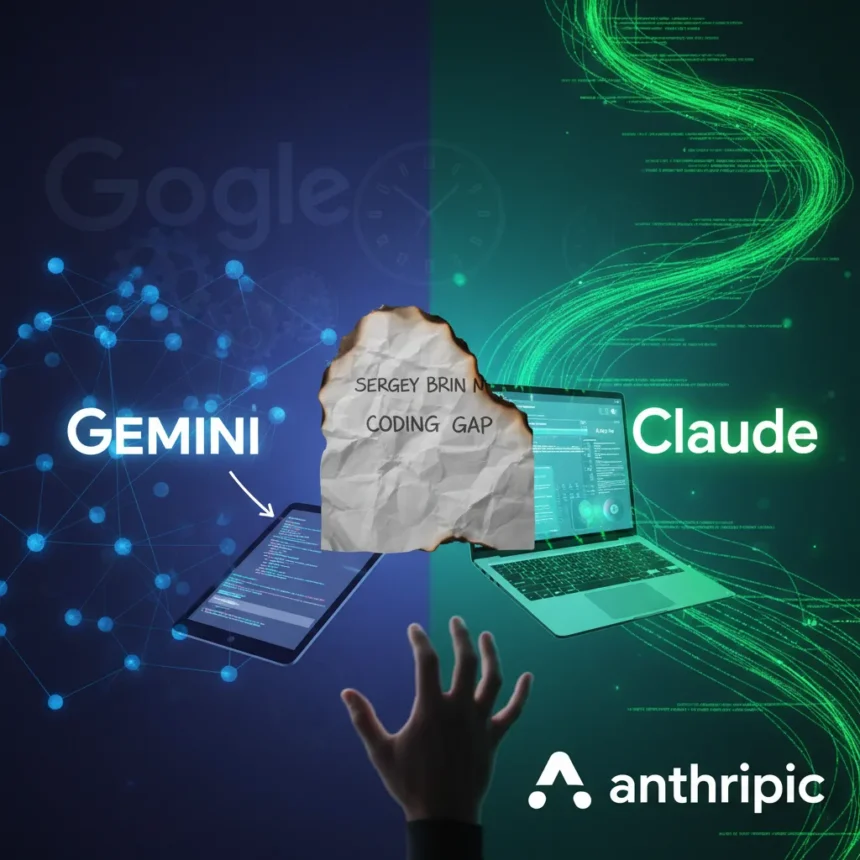

Google co-founder Sergey Brin has admitted in a leaked memo that Gemini lags behind Anthropic’s Claude in AI agent coding capabilities, a rare acknowledgment of competitive weakness in a field where autonomous code execution is becoming central to developer workflows.

Key Takeaways

- Sergey Brin’s leaked memo states Google must urgently bridge the gap in AI agent coding capabilities versus Claude.

- Claude Code, released February 2025, excels in autonomous code analysis, multi-step planning, and long-context codebase awareness.

- Google Antigravity uses Gemini 3 Pro with a 1M token context window, the largest among competitors, but trails in configurable skills and rules.

- Claude Code scores 92/100 in technical writing and supports autonomous execution with human approval checkpoints.

- Cursor and OpenAI Codex remain viable alternatives, though neither matches the autonomy of leading agentic platforms.

What the Leaked Memo Reveals About AI Agent Coding Capabilities

The internal Google memo exposes a candid assessment: Gemini’s AI agent coding capabilities are not competitive with Claude Code in the ways that matter most to developers. Brin’s statement that “we must urgently bridge the gap” signals alarm within Google’s leadership about losing ground in a domain where AI agents autonomously write, test, and refactor code at scale. This is not a marginal difference. It reflects architectural choices that have left Google playing catch-up in a market where coding agents are becoming table stakes for enterprise AI.

What makes Claude Code’s advantage significant is not raw processing power but execution philosophy. Claude Code operates as a terminal CLI agent with a 200K token context window, supporting models like Claude Opus 4, Sonnet 4, and Haiku 3.5. The platform excels in code analysis, agentic search across million-line codebases, multi-step autonomous planning, and problem-fixing with human checkpoints for review and approval. Developers delegate an objective, the agent plans and executes the work autonomously, and it then pauses for human sign-off before committing changes.
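The delegate, execute, and pause-for-approval pattern described above can be sketched in a few lines of Python. This is an illustrative sketch only, not Claude Code's actual implementation: `Step`, `RunLog`, and `run_with_checkpoints` are hypothetical names, and the rule that only file-changing steps need sign-off is an assumption made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    description: str
    mutates: bool  # True if the step would change files or commit (assumed flag)


@dataclass
class RunLog:
    executed: List[str] = field(default_factory=list)
    skipped: List[str] = field(default_factory=list)


def run_with_checkpoints(plan: List[Step], approve: Callable[[Step], bool]) -> RunLog:
    """Run read-only steps freely; ask a human before any mutating step."""
    log = RunLog()
    for step in plan:
        if step.mutates and not approve(step):
            # The human declined, so the step is recorded but never run.
            log.skipped.append(step.description)
            continue
        log.executed.append(step.description)
    return log
```

In practice, `approve` would prompt the developer in the terminal. Passing it in as a function keeps the approval policy separate from the planning loop.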

Google’s Response: Antigravity and the Context Window Gamble

Google’s answer to Claude Code is Antigravity, also referred to as Gemini CLI in some contexts, which uses Gemini 2.5 Pro or Gemini 3 Pro with a 1M token context window, the largest among competing platforms. On paper, this looks like a decisive advantage. A 1M token context means the agent can hold entire codebases in memory, understand architectural patterns across massive refactors, and reason about dependencies without losing context.
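To put the two window sizes in perspective, here is a rough back-of-the-envelope sketch. It assumes about four characters per token, a common rule of thumb rather than a figure from either vendor. Real tokenizers vary by language and content, and the function names are hypothetical.

```python
import math

# Context window sizes quoted in this article.
CLAUDE_CODE_WINDOW = 200_000
ANTIGRAVITY_WINDOW = 1_000_000


def estimate_tokens(total_chars: int, chars_per_token: float = 4.0) -> int:
    """Rough token estimate; the 4 chars/token ratio is an assumption."""
    return math.ceil(total_chars / chars_per_token)


def fits_in_window(total_chars: int, window: int) -> bool:
    """True if the estimated token count fits in the given context window."""
    return estimate_tokens(total_chars) <= window
```

Under that assumption, a 2,000,000-character codebase comes to roughly 500,000 tokens. That would fit in a 1M window in a single pass but would need to be searched or chunked with a 200K window.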

The problem is that context size alone does not translate to better coding agents. Antigravity is described as feeling like a “clone” of Claude Code in user experience, including similar thinking animations and interface patterns, but with a critical shortcoming: fewer configurable skills and rules. Claude allows developers to specify detailed tool restrictions, for example marking certain tools as read-only for code review scenarios. Antigravity’s minimalist YAML configuration approach offers fewer guardrails, forcing developers to rely more on the model’s judgment than on explicit constraints.
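The idea of per-tool restrictions can be illustrated with a small policy check. This is a sketch under stated assumptions, not the configuration format of Claude Code or Antigravity: the tool names, the `Access` levels, and the default-deny behavior are all hypothetical.

```python
from enum import Enum
from typing import Dict


class Access(Enum):
    DENY = "deny"
    READ_ONLY = "read_only"
    READ_WRITE = "read_write"


# Hypothetical policy for a code review session: the agent may inspect
# and search files, but may not edit them or run shell commands.
CODE_REVIEW_POLICY: Dict[str, Access] = {
    "file_reader": Access.READ_ONLY,
    "search": Access.READ_ONLY,
    "file_editor": Access.DENY,
    "shell": Access.DENY,
}


def is_allowed(policy: Dict[str, Access], tool: str, wants_write: bool) -> bool:
    """Check a tool call against the policy; unknown tools are denied."""
    access = policy.get(tool, Access.DENY)
    if access is Access.DENY:
        return False
    if wants_write:
        return access is Access.READ_WRITE
    return True
```

An explicit table like this is what lets a developer constrain the agent up front instead of trusting the model to infer that a review should not modify code.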

Both platforms launched as free offerings, with Antigravity providing a generous free tier authenticated through personal Google accounts. Claude Code remains free as of February 2025, though Anthropic has not committed to permanent free access.

Where Claude Code and Google Antigravity Differ Most

The gap in AI agent coding capabilities is not about speed or raw intelligence. It is about autonomy architecture and developer control. Claude Code’s strength lies in its ability to handle complex, multi-language projects with high configurability—developers can set rules that constrain the agent’s actions in ways that match their workflow. If you are running a code review, you can tell Claude Code to analyze without writing. If you are prototyping, you can let it loose.

Google Antigravity compensates with sheer context capacity and multi-model support, allowing developers to choose between different Gemini versions for different tasks. For teams working on large refactors or architectural changes, the 1M token window is genuinely useful. But without equivalent configurability, the agent makes more “guesses” about what developers want, rather than operating within explicit boundaries.

Cursor, another competitor, leads in setup speed and Docker deployment workflows, though it does not match either platform’s autonomous planning depth. OpenAI’s Codex remains powerful but lacks the agent-driven autonomy that Claude and Antigravity offer.

Why This Matters Beyond Google’s Internal Politics

Brin’s admission that Gemini trails in AI agent coding capabilities signals a broader shift in how AI vendors compete. The race is no longer about language model size or inference speed. It is about building systems that developers trust to execute code autonomously, with the right balance of power and control. Claude Code has achieved that balance first. Google is playing catch-up, betting that a larger context window and free tier access will eventually overcome architectural disadvantages.

For developers, this competition is healthy. It means both platforms will iterate rapidly to improve autonomy, reduce errors, and add features that make coding agents more useful. For Google, the leaked memo is less comfortable. Acknowledging a gap is the first step toward closing it, but the urgency of Brin’s language suggests the gap is wider than public statements have indicated.

Can Google Antigravity Close the Gap?

Google has the resources to improve Antigravity’s configurability and agent reasoning, but architectural changes take time. The 1M token context window is a genuine strength, and the free tier access removes friction for adoption. However, if developers perceive Claude Code as more predictable and controllable, they will stick with it even if Google’s agent is technically capable. Trust in AI agents is built on reliability and control, not just capability.

What should developers prioritize when choosing between Claude Code and Google Antigravity?

If you need fine-grained control over agent behavior and are working on code review or sensitive refactors, Claude Code’s configurability gives it an edge. If you are handling massive codebases or need multi-model flexibility, Google Antigravity’s 1M token context and free tier make it worth testing. Neither platform is universally superior—the choice depends on your workflow and risk tolerance.

Is AI agent coding capability the same as code quality?

No. Agent capability measures how autonomously a system can plan and execute tasks; code quality measures whether the output is correct, maintainable, and secure. Claude Code scores 92/100 in technical writing metrics, but that score reflects analysis and documentation quality rather than code generation alone. Both platforms still require human review before production deployment.

Will Google’s larger context window eventually make Antigravity the better choice?

Context size is one factor among many. Claude Code’s architectural advantage in configurability and planning may prove more valuable than raw context capacity, depending on how Google evolves Antigravity’s rule engine. The competition will likely narrow as both vendors iterate, but Google’s current gap in AI agent coding capabilities is real and requires more than a larger context window to close.

Sergey Brin’s leaked memo is a watershed moment for Google. It admits, plainly, that Anthropic has moved faster in a critical domain. The question is not whether Google can catch up—it clearly can. The question is whether it will do so before developers build habits and workflows around Claude Code that become sticky. In competitive AI markets, first-mover advantage in developer trust is harder to overcome than technical gaps.

Edited by the All Things Geek team.

Source: TechRadar