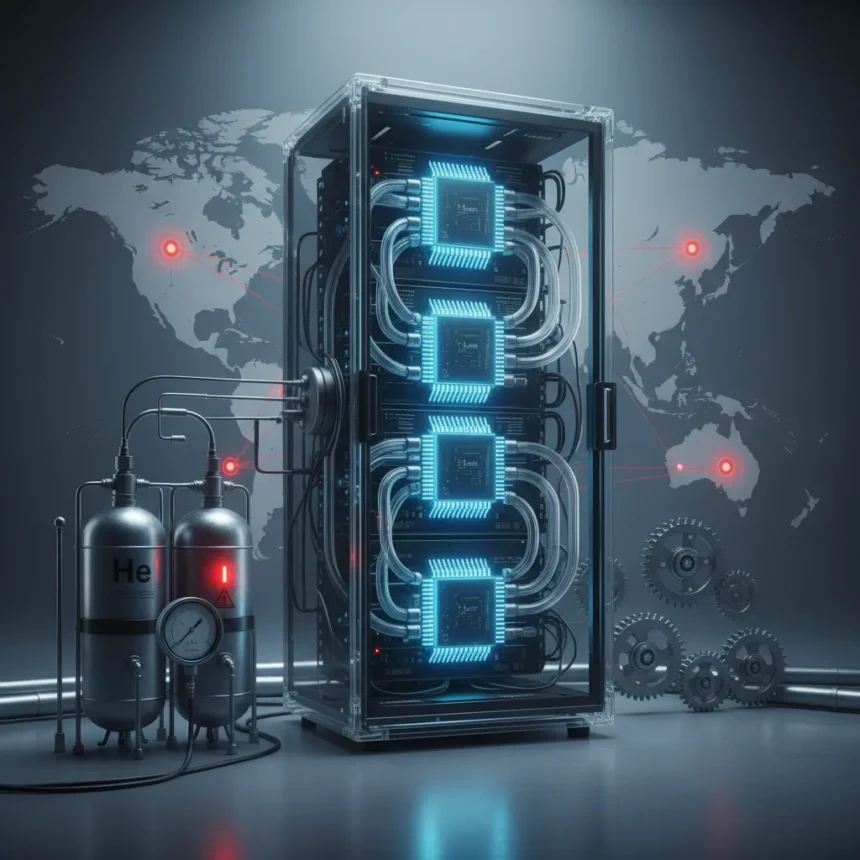

The helium shortage facing AI infrastructure is one of the least discussed yet most consequential constraints on global AI expansion. Helium, a non-renewable inert gas essential to semiconductor manufacturing and to cryogenic cooling, has become an unexpected chokepoint in an industry focused on computational power and training efficiency.

Key Takeaways

- Helium is critical for cooling in chip fabrication, so shortages reach AI data centers through the hardware they run on.

- Global helium supply is finite and concentrated in a handful of extraction locations.

- Data center expansion plans depend on reliable helium availability, mainly through the supply of chips and equipment.

- The shortage directly impacts semiconductor production timelines and AI model training infrastructure.

- Long-term solutions require investment in helium recovery and alternative cooling technologies.

Why Helium Matters to AI Infrastructure

Helium’s role in AI infrastructure extends far beyond novelty applications. In semiconductor fabs, it cools wafers during plasma etching and deposition and serves as a purge and carrier gas, so supply disruptions feed directly into chip output. Helium is also the coolant of choice for cryogenic systems that must reach temperatures no other gas can provide. Because large language models and training clusters run on advanced chips, a helium shortage threatens the hardware pipeline that hyperscale AI data centers depend on, even though most of those facilities cool their servers with air or liquid systems rather than helium.

The physics is straightforward: helium has the lowest boiling point of any element at atmospheric pressure, about 4.2 K (−269 °C), which makes it irreplaceable for cooling applications that other gases cannot reach. When helium is scarce, chipmakers may have to ration it or scale back production, delaying chip deliveries and, in turn, data center buildouts. This translates into delayed model training, reduced inference capacity, and higher operational costs for AI providers worldwide.

The Global Helium Supply Problem

Helium extraction is geographically concentrated and fundamentally constrained by geology. The gas accumulates in underground reservoirs and is usually recovered as a byproduct of natural gas processing, with production concentrated in a few countries, notably the United States, Qatar, Algeria, and Russia. Separating it requires specialized plants and expertise. Unlike renewable resources, helium cannot be manufactured or synthesized at scale: it must be extracted from existing reserves, and those reserves are finite.

The current global helium supply chain operates under significant strain. Major extraction facilities face aging infrastructure, geopolitical complications in certain regions, and limited investment in new extraction capacity. When supply tightens, prices spike, and chipmakers and data center operators must either accept higher costs or defer expansion projects. This creates a cascading effect: delayed infrastructure buildout slows AI model development, reduces competition among AI providers, and ultimately concentrates computational resources among companies wealthy enough to absorb helium cost increases.

Implications for AI Development Timelines

The helium shortage directly affects how quickly new models can be trained and deployed. Large language model training requires sustained computational intensity over weeks or months, and hardware or cooling failures during a training run waste large amounts of time and electricity. Companies planning new data centers increasingly have to weigh helium-related supply risk in site selection and buildout schedules, adding complexity to infrastructure planning that previously focused mainly on power availability and network connectivity.

Smaller AI companies and research institutions face disproportionate pressure. While major cloud providers can negotiate long-term helium supply contracts and absorb price volatility, smaller players lack bargaining power and may find their expansion plans stalled by supply constraints. This dynamic reinforces market consolidation in the AI industry, where scale and capital reserves become prerequisites for accessing critical infrastructure resources.
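The advantage of a long-term contract is easiest to see as cost variability rather than average cost. The Python sketch below compares a buyer paying a fixed contract price with one exposed to a volatile spot market. The function name and every price are hypothetical, chosen so that both buyers pay the same total and only the quarter-to-quarter swings differ.

```python
from statistics import pstdev


def quarterly_costs(volume: float, prices: list[float]) -> list[float]:
    """Cost of buying the same volume each quarter at the given per-unit prices."""
    return [volume * price for price in prices]


# Hypothetical per-unit prices for illustration only; not market data.
CONTRACT_PRICES = [10.0, 10.0, 10.0, 10.0]  # price locked in by a long-term contract
SPOT_PRICES = [7.0, 9.0, 14.0, 10.0]        # spot market that spikes when supply tightens

contract = quarterly_costs(100.0, CONTRACT_PRICES)
spot = quarterly_costs(100.0, SPOT_PRICES)

# Same total spend, but very different quarter-to-quarter spread (population std dev).
print("contract total:", sum(contract), "quarterly spread:", pstdev(contract))
print("spot total:", sum(spot), "quarterly spread:", round(pstdev(spot), 1))
```

Both totals are equal here by construction; the spot buyer's quarterly bill, however, ranges from 700 to 1,400 units, which is the kind of volatility a buyer without contract leverage has to absorb.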

What Solutions Exist?

The most immediate response involves improving helium recovery and recycling in existing systems. Data centers and semiconductor manufacturers can capture helium that would otherwise be vented, reducing net consumption. However, recycling infrastructure requires upfront investment and operational discipline, and adoption remains uneven across the industry.
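The effect of recycling on purchases is simple arithmetic: net purchases equal gross use multiplied by one minus the recovery rate. The sketch below assumes a single, steady recovery rate; the function name and the 1,000-unit monthly figure are hypothetical, not industry data.

```python
def net_helium_purchase(gross_use: float, recovery_rate: float) -> float:
    """Helium that must still be bought after recycling.

    gross_use: helium a process consumes per period (any consistent unit).
    recovery_rate: fraction captured and reused instead of vented, from 0 to 1.
    """
    if not 0.0 <= recovery_rate <= 1.0:
        raise ValueError("recovery_rate must be between 0 and 1")
    return gross_use * (1.0 - recovery_rate)


# Hypothetical site using 1,000 units per month at different recovery rates.
for rate in (0.0, 0.5, 0.9):
    print(f"recovery {rate:.0%}: buy {net_helium_purchase(1000.0, rate):.0f} units")
```

At a 90% recovery rate, the site buys only a tenth of its gross use, which is why recovery systems can pay back their upfront cost when prices are high.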

Longer-term solutions include developing alternative cooling technologies that reduce helium dependence. Liquid nitrogen and other cryogenic fluids offer partial substitutes for applications that do not need helium's extreme cold (liquid nitrogen boils at about 77 K), and emerging cooling architectures using phase-change materials or advanced heat exchangers could reduce helium requirements. However, transitioning away from helium-based cooling takes years of research and requires retrofitting existing infrastructure, a costly and time-consuming process that most operators cannot undertake immediately.
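Whether a substitute works depends on how cold the application must get, since an open bath of boiling cryogen holds equipment near that liquid's boiling point. The Python sketch below filters a small table of approximate boiling points at atmospheric pressure. The table and function are illustrative assumptions, and the check deliberately ignores cost, flammability (a serious issue for hydrogen), and techniques such as pumping on a liquid to lower its temperature.

```python
# Approximate normal boiling points (at 1 atm) in kelvin.
BOILING_POINT_K = {
    "helium": 4.2,
    "hydrogen": 20.3,
    "neon": 27.1,
    "nitrogen": 77.4,
}


def candidate_coolants(target_k: float) -> list[str]:
    """Cryogens whose boiling point lies below the target temperature, coldest first.

    Simplification: ignores pressure reduction, cost, flammability, and supply.
    """
    viable = [name for name, bp in BOILING_POINT_K.items() if bp < target_k]
    return sorted(viable, key=BOILING_POINT_K.__getitem__)


print(candidate_coolants(10.0))   # ['helium']
print(candidate_coolants(100.0))  # ['helium', 'hydrogen', 'neon', 'nitrogen']
```

A target of a few kelvin, the range used by conventional superconducting magnets, leaves only helium, while anything warmer than about 77 K opens the door to liquid nitrogen.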

Investment in new helium extraction capacity represents another path forward, but geological constraints and high capital requirements limit expansion. Helium exploration is expensive and risky, with no guarantee of finding economically viable reserves. Without government support or long-term supply contracts, private companies have little incentive to fund new extraction projects.

Does the AI industry acknowledge this constraint?

Public discussion of the AI infrastructure helium shortage remains minimal. Major AI companies rarely highlight supply chain vulnerabilities that might concern investors or customers. The focus remains on computational breakthroughs, model capabilities, and scaling efficiency—not on the unglamorous infrastructure dependencies that enable those advances. This silence creates a false impression that AI expansion faces no material constraints beyond capital and talent.

How does helium scarcity compare to other infrastructure bottlenecks?

The helium shortage is distinct from other supply chain challenges like semiconductor wafer capacity or GPU availability. Semiconductor production can, in principle, scale with new fabs and investment. Helium supply, by contrast, is bounded by Earth’s natural reserves and extraction economics: reserves are finite, and extraction cannot be increased indefinitely. This makes helium a harder constraint to overcome through conventional market mechanisms.
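A common rough indicator for a finite resource is the reserves-to-production ratio: reserves divided by annual extraction. The sketch below contrasts that static ratio with a scenario in which extraction grows each year to meet rising demand. Function names and all figures are hypothetical, and both calculations ignore new discoveries and recycling.

```python
def static_rp_years(reserves: float, annual_production: float) -> float:
    """Reserves-to-production ratio: years of supply at today's extraction rate."""
    if annual_production <= 0:
        raise ValueError("annual_production must be positive")
    return reserves / annual_production


def years_until_depleted(reserves: float, annual_production: float, growth_rate: float) -> int:
    """Full years of supply if extraction grows by growth_rate each year.

    Ignores new discoveries and recycling.
    """
    if annual_production <= 0 or growth_rate < 0:
        raise ValueError("need positive production and non-negative growth")
    remaining, production, years = reserves, annual_production, 0
    # Count each full year the remaining stock can cover that year's extraction.
    while remaining >= production:
        remaining -= production
        production *= 1.0 + growth_rate
        years += 1
    return years


# Hypothetical reserve and production figures, in arbitrary units.
print(static_rp_years(100.0, 2.0))
print(years_until_depleted(100.0, 2.0, 0.0))
print(years_until_depleted(100.0, 2.0, 0.05))
```

With these made-up numbers, 5% annual growth in extraction cuts the horizon from 50 years to 25, which is why a static ratio tends to overstate how long a finite stock will last under rising demand.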

The AI revolution depends on solving problems that seem solvable through engineering and capital. The helium constraint reveals an uncomfortable truth: some bottlenecks are geological rather than technological, and no amount of computational cleverness can manufacture a finite natural resource. Until the industry invests seriously in helium recovery, alternative cooling systems, and new extraction capacity, this overlooked crisis will continue limiting AI infrastructure expansion worldwide.

This article was written with AI assistance and editorially reviewed.

Source: TechRadar