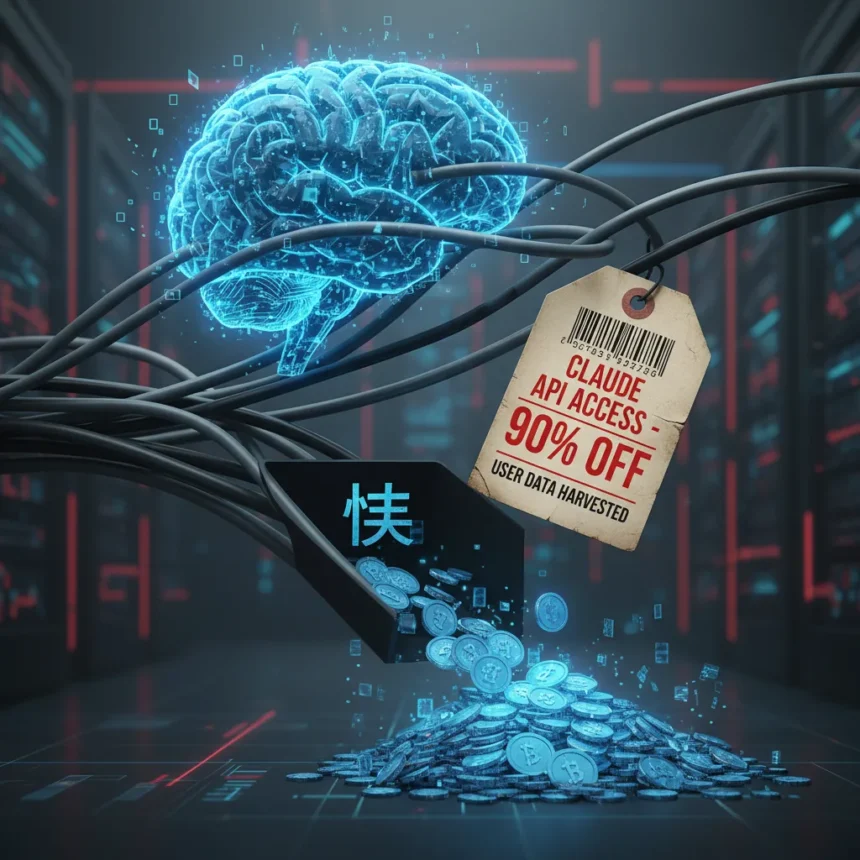

The Claude API grey market is a shadow economy in China in which proxy networks resell access to Anthropic’s API at roughly 10% of official rates while systematically harvesting user prompts and outputs for resale as AI training material. This trade, discounted access paid for with user data, has created a lucrative black market that Anthropic has struggled to contain despite successive access restrictions in September 2025 and April 2026.

Key Takeaways

- Chinese proxy services resell Claude API access at a discount of roughly 90% compared with official Anthropic pricing

- User prompts and model outputs are harvested and resold as training data to competing AI labs

- Anthropic alleged in February 2026 that DeepSeek, Moonshot AI, and MiniMax used 24,000 fraudulent accounts to generate 16+ million exchanges with Claude

- Proxy operators route traffic through geofencing bypass infrastructure and distribute requests across thousands of compromised accounts

- Every access restriction Anthropic implements spawns corresponding circumvention infrastructure, creating a persistent cat-and-mouse cycle

How the Claude API grey market operates

Chinese developers, researchers, and hobbyists bypass Anthropic’s regional restrictions by buying access through “transfer stations” and “relay stations.” These are proxy operators whose infrastructure routes API requests around Anthropic’s geofencing. Instead of paying official rates, users buy discounted access from these operators, and in practice their data becomes part of the price. The proxy system distributes user requests across thousands of fraudulent or compromised accounts. It masks the origin of traffic through third-party routers and spreads load across different cloud providers and API keys to evade detection.

The financial incentive is stark. Proxy services charge approximately 10% of official Anthropic API pricing. That 90% discount makes advanced AI access affordable for price-sensitive developers in regions where Anthropic restricts service, and enforcement has not kept pace with a pricing gap this large. In return for the cost reduction, users hand over their prompts and model outputs, the actual conversation data flowing through the API. The proxy infrastructure captures these exchanges automatically as training material.

Anthropic documented the scale of this abuse in February 2026, alleging that three major Chinese AI laboratories—DeepSeek, Moonshot AI, and MiniMax—operated coordinated distillation campaigns generating over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts. These were not isolated misuse cases but industrial-scale operations designed to extract Claude’s capabilities and transfer them into competing models. The labs maintained what researchers call “hydra clusters,” operating tens of thousands of accounts simultaneously across different API keys and cloud providers to spread requests and avoid triggering Anthropic’s abuse detection systems.

Data harvesting and secondary markets

The grey market explicitly monetizes user data as a commodity. When Chinese developers use proxy services, they are not simply buying discounted API access—they are unknowingly contributing their proprietary prompts, code samples, and model outputs to a secondary training data market. Proxy operators extract this data and resell it to AI labs building competing models, creating a supply chain where Western AI capabilities are distilled into Chinese alternatives through harvested user conversations.

This data extraction carries concrete privacy risks. According to security researchers, leaked user data from proxy services may be weaponized for marketing, fraud, or extortion. Developers who believe they are using a legitimate discount service may discover months later that their proprietary code, research prompts, or business logic has been extracted and resold. Some Chinese developers have begun building their own Claude Code API proxies and open-sourcing operational guidelines specifically to avoid these privacy risks, attempting to create a “cleaner” proxy alternative.

The exchange is lopsided. Users save roughly 90% on API costs, but their data is harvested and resold, and the lasting value flows in one direction. Anthropic loses API revenue and IP protection, users lose data privacy, and Chinese AI labs gain training material at minimal cost. OpenAI has documented similar tactics. In February 2026 it alleged that DeepSeek systematically “stole” its intellectual property through large-scale distillation, using third-party routers and masking techniques to bypass geographic restrictions and harvest ChatGPT outputs.

Anthropic’s enforcement cycle and persistent workarounds

Anthropic has implemented successive access restrictions. In September 2025 it prohibited access by entities more than 50% owned by companies headquartered in unsupported regions, including China, regardless of where those entities operate. In April 2026 it introduced additional restrictions. Yet each enforcement measure spawns corresponding circumvention infrastructure. Proxy operators route requests through new jurisdictions, add layers of account obfuscation, or distribute load across more API keys and cloud providers.

This creates a structural problem. Running a proxy network costs far less than operators earn from selling discounted access and reselling user data, so they have a strong financial incentive to innovate around every restriction Anthropic deploys. The proxy market will remain profitable as long as two conditions hold: the gap between official and proxy pricing persists, and Chinese developers lack legitimate regional access. Anthropic’s security team is essentially playing defense against opponents with a durable economic motive to breach each new wall.

The vulnerability extends beyond Anthropic. According to security researchers, Google Gemini and Microsoft GitHub Copilot are susceptible to the same prompt injection vulnerabilities that affect Claude Code agents. These flaws can be exploited to steal API credentials and access tokens. Yet vendor bug bounty payments remain minimal. Anthropic paid $100 for a vulnerability disclosure, GitHub paid $500, and Google did not disclose its payment, which suggests limited institutional investment in addressing the root causes.

What this means for users and the broader AI market

The Claude API grey market reveals a structural tension in the global AI economy. Western AI labs restrict geographic access for compliance, security, or geopolitical reasons, but these restrictions create price gaps that black markets exploit. Chinese developers face a choice: pay full price through workarounds, use proxy services and lose data privacy, or build their own infrastructure. The proxy market thrives because the second option appears cheaper than the alternatives, even though its true cost is data extraction.

For Anthropic, the grey market represents both a revenue loss and an IP protection failure. Distillation campaigns like those attributed to DeepSeek, Moonshot, and MiniMax are explicitly designed to transfer Claude’s capabilities into competing models. Their scale, 24,000 accounts and more than 16 million exchanges, suggests these operations are not marginal but material to how Chinese AI labs are building their own models. Anthropic’s public accusation in February 2026 marks an escalation in U.S.-China AI competition narratives. It came months after Anthropic settled a $1.5 billion copyright claim brought by authors, a sequence that suggests IP protection has moved higher on the company’s agenda.

Is the Claude API grey market legal?

The proxy services violate Anthropic’s terms of service and regional access restrictions, which are contractually binding. Using fraudulent accounts, harvesting user data without consent, and reselling that data as training material constitute terms of service violations and potentially data privacy violations under various jurisdictions’ laws. However, enforcement across borders remains difficult, particularly when proxy operators are located in China and Anthropic’s legal remedies are limited to account suspension and IP litigation.

Can users protect themselves from data harvesting through proxies?

Users who purchase Claude API access through proxy services should assume their prompts and outputs will be harvested and resold. The only reliable protection is to avoid proxy services entirely and use officially supported access channels. For developers in restricted regions without official access, building private proxy infrastructure or using alternative models may reduce exposure to data harvesting, though these options require technical expertise and do not eliminate the underlying risk.

Why doesn’t Anthropic just lower prices to compete with the grey market?

Anthropic’s pricing reflects its cost structure, safety investments, and business model. The company is unlikely to match 90% discounts without fundamentally restructuring its revenue. Lowering prices globally also might not address the core issue, because proxy operators profit from harvesting data, not just from offering discounts. A lower official price would compress the proxy market’s margin without eliminating the data extraction incentive.

The Claude API grey market exposes a fundamental vulnerability in how Western AI companies enforce geographic access controls and protect intellectual property. As long as pricing gaps exist and enforcement mechanisms remain asymmetrical, proxy networks will continue to extract value from both users and AI labs. Anthropic’s successive restrictions have failed to contain the market because they address symptoms, such as fraudulent accounts and proxy traffic, rather than the underlying economic incentive. The real risk lies not in geopolitics alone, but in how this shadow supply chain draws ordinary developers into data extraction schemes while feeding Chinese AI labs with Western training material at minimal cost.

Edited by the All Things Geek team.

Source: Tom's Hardware