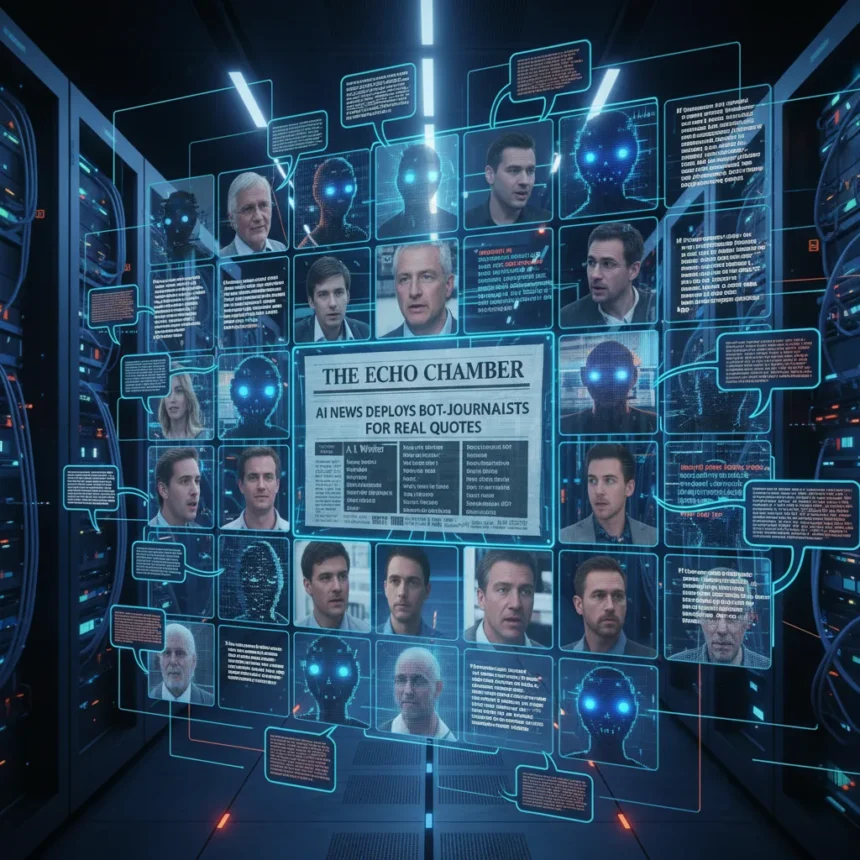

Reporting on a news site staffed by fake AI journalists has exposed a troubling automation pipeline: an outlet linked to an OpenAI super PAC deployed bots posing as journalists to interview real people, gathering authentic quotes for publication under fake bylines. Since late December, the site has published 94 articles using a fully automated system that drafts stories, reviews them, and deploys bots to solicit quotes before publishing.

Key Takeaways

- News site linked to OpenAI super PAC published 94 articles since late December using AI bots posing as journalists.

- Fully automated pipeline handles story drafting, editorial review, bot deployment for quotes, and publication.

- Real people were interviewed by fake AI journalists, providing authentic quotes for articles published under fabricated bylines.

- The operation raises ethical questions about deception in media and corporate influence.

- Automation pipeline operates end-to-end without human journalists conducting interviews.

How the fake AI journalist operation works

The automated system operates without a human journalist at any stage. Software drafts the stories and reviews them, then bots are deployed to solicit quotes from real people under fabricated writer names. Interviewees are not told they are speaking with an AI rather than a human reporter. The pipeline then publishes the articles, pairing authentic quotes with fake bylines to create a hybrid of real and artificial content.

The scale of the operation is notable. Publishing 94 articles since late December indicates a sustained, systematic approach to content generation. Each article contains real quotes from real people, which lends credibility to stories written entirely by automation. The quotes are genuine; the deception lies in the byline and the pretense of human journalism.

Why the OpenAI super PAC connection matters

The news site’s connection to an OpenAI super PAC raises questions about corporate influence on public discourse. Super PACs operate independently of the corporations they support, but they share aligned interests. A news outlet publishing dozens of articles under fake journalist names could shape public opinion on AI policy, regulation, and OpenAI’s competitive position without readers realizing the source is algorithmically generated advocacy rather than independent reporting.

This is not accidental journalism—it is a deliberate strategy. The site chose to automate every stage: drafting, review, and outreach. It chose to use fake bylines. It chose to publish under the guise of a news organization. Each decision compounds the deception. Readers cannot distinguish between articles written by humans with editorial judgment and articles generated by algorithms optimized for engagement or political messaging.

The ethics of fake AI journalists and automated deception

Traditional journalism operates on a foundation of trust: readers assume a human journalist conducted interviews, made editorial decisions, and stands behind their byline. That contract is broken when bots pose as journalists. The quotes are real, but the framing, selection, and narrative are entirely algorithmic. Real people were interviewed without knowing they were speaking to AI, which raises consent issues—did they agree to participate in an automated news operation, or did they think they were helping a human reporter?

The broader concern extends beyond this single site. If one outlet can publish 94 articles with real quotes under fake bylines, what prevents others from doing the same at larger scale? The technology is not exotic; it is straightforward automation applied to journalism. The barrier to entry is low, and the potential for abuse is high. Other organizations could use AI bots to conduct interviews, generate stories, and publish under human-sounding bylines without disclosure, and readers would have no way to know.

This operation also undercuts legitimate AI journalism. Some publications experiment with AI as a tool—summarizing, drafting, or assisting human reporters. Those efforts are transparent about AI involvement. This site did the opposite: it hid the automation and fabricated human authorship. The distinction matters for credibility and for public trust in media institutions.

What this reveals about AI accountability

The case demonstrates a gap in accountability. The site operated openly enough to be discovered, but there was no mechanism to prevent it beforehand. No platform policy, industry standard, or regulatory requirement stopped the bots from posing as journalists and soliciting quotes. The site was found because someone investigated it, not because the operation was inherently detectable or self-reporting.

OpenAI and other AI companies have published ethical guidelines, but those guidelines do not appear to explicitly prohibit creating news sites staffed by bots. The gap between stated values and actual deployment is where deception thrives. An operation like this one can exist in plain sight because the specific combination of automation, deception, and political ties was not anticipated or addressed in existing frameworks.

Is this the only AI news site using fake journalists?

The discovery of one such operation raises the question: how many others exist undetected? If a news site linked to an OpenAI super PAC deployed bots openly enough to be found, others may be operating with better operational security. The technical barriers are minimal—drafting, reviewing, and publishing automation is standard. The only constraint is willingness to deceive. Without transparency requirements or detection mechanisms, similar operations could proliferate across political movements, corporate interests, and ideological campaigns.

What happens next for the operation?

The discovery has exposed the site, but consequences remain unclear. Deplatforming is possible but not guaranteed. The articles remain published with real quotes and fake bylines. Readers who encountered those articles may never learn they were written by bots. OpenAI may suffer reputational damage by association, but the operational damage to the site is limited: it can rebrand, adjust tactics, or simply continue under new names.

Regulatory responses are unlikely in the near term. No law explicitly prohibits AI bots from posing as journalists or using fake bylines, though misrepresentation laws could apply. The FTC has authority over deceptive practices, but enforcement is slow and uncertain. By the time regulatory action might occur, the site could have published thousands more articles or spawned imitators.

FAQ

How many articles did the AI news site publish using fake journalists?

The site published 94 articles since late December using a fully automated pipeline that deployed bots to solicit quotes from real people under fabricated bylines.

Were the quotes in these articles real or fabricated?

The quotes were real—bots interviewed actual people and gathered authentic responses. The deception was in the byline and the pretense of human journalism, not in the quotes themselves.

What is a super PAC and why does the connection matter?

A super PAC is an independent political committee that can raise and spend unlimited funds on political advocacy, provided it does not coordinate with or contribute directly to candidates. A news site linked to an OpenAI super PAC raises concerns about corporate-backed content designed to influence public opinion on AI policy and regulation without readers knowing the source is algorithmically generated.

The discovery of a news site staffed by bots posing as journalists marks a turning point in how automation intersects with media deception. The site showed that automated systems can solicit quotes, generate articles, and publish under fake names at scale. The 94 articles published since late December are not aberrations; they are a proof of concept. Without transparency requirements, detection mechanisms, or regulatory frameworks, similar operations will likely proliferate. Readers can no longer assume that every byline represents a human journalist or that every quote comes from a human-conducted interview. Trust in news is already fragile, and operations like this one erode it further.

This article was written with AI assistance and editorially reviewed.

Source: Tom's Hardware