Google’s Pentagon AI deal represents a dramatic shift in the company’s relationship with military applications of artificial intelligence. On April 28, 2026, Google amended its existing Department of Defense contract to grant Gemini access to classified networks for ‘any lawful government purpose,’ a phrase that echoes the contentious language Pentagon officials have pushed across the AI industry.

Key Takeaways

- Google expanded its Pentagon contract on April 28, 2026, allowing Gemini AI access to classified government data.

- Over 600 Google employees signed a letter opposing the deal, citing misuse risks.

- The contract permits ‘any lawful government purpose’ but grants Google no veto power over Pentagon decisions.

- Anthropic rejected similar terms in February 2026, calling them too broad; OpenAI accepted them in March 2026.

- Google withdrew from a $100 million drone swarm program in February 2026 after internal ethics review.

What the Google Pentagon AI deal actually permits

The amended contract allows the Pentagon to use Gemini for any lawful government purpose, including operations on classified networks. Google must ‘assist in adjusting its AI safety settings and filters at the government’s request,’ according to contract language. This means the Pentagon can override Google’s built-in safeguards without the company’s approval. The contract includes language stating AI should not be used for domestic mass surveillance or autonomous weapons ‘without appropriate human oversight,’ but legal experts note this phrasing is non-binding: under the contract, Google has ‘no right to control or veto lawful government operational decision-making.’

The distinction matters enormously. A commitment not to use AI for surveillance ‘without oversight’ is not the same as a ban on surveillance. It is a commitment to have someone in the room when it happens. Google’s statement emphasized this framing: ‘We are proud to be part of a broad consortium of leading AI labs and technology and cloud companies providing AI services and infrastructure in support of national security. We remain committed to the private and public sector consensus that AI should not be used for domestic mass surveillance or autonomous weaponry without appropriate human oversight.’ Notice what is absent: any binding restriction on what the Pentagon actually does.

Why 600 Google employees said no

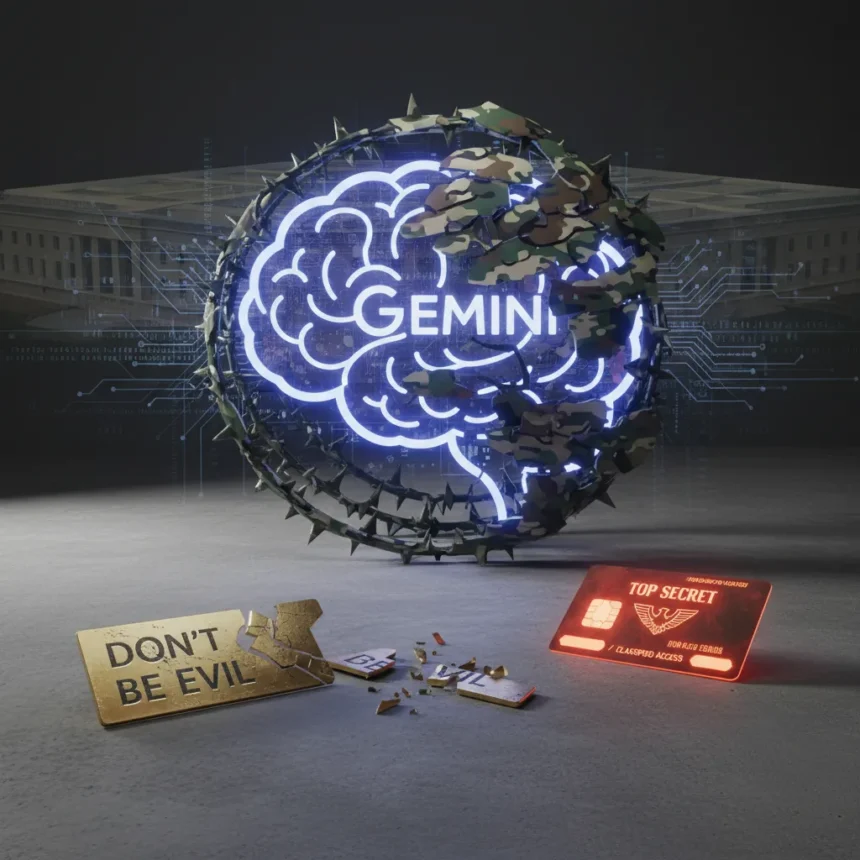

Internal opposition to the deal was swift and substantial. Over 600 Google employees signed a letter to CEO Sundar Pichai urging rejection, arguing that classified work risks AI misuse and that ‘refusing classified work is the only way to ensure Google’s AI isn’t misused.’ This echoes the 2018 Project Maven revolt, when thousands of Google employees protested the company’s involvement in a Pentagon program that applied AI to drone footage. Back then, Google was still known for its motto, ‘Don’t Be Evil.’ The company dropped the phrase from the preface of its code of conduct in 2018, but the principle still resonated internally.

The timing is telling. In March 2026, over 900 Google and 100+ OpenAI employees signed a public pledge supporting Anthropic’s refusal to accept similar Pentagon terms. Anthropic, a Google-backed competitor, rejected ‘any lawful purpose’ language in February 2026, insisting on explicit restrictions against autonomous weapons and domestic mass surveillance. The Pentagon labeled Anthropic’s position a supply chain risk—a characterization a federal judge called ‘Orwellian’ in ongoing litigation. Google, by contrast, capitulated.

How Google’s deal compares to competitors

Google is not alone in striking Pentagon AI agreements, but the terms vary significantly. OpenAI signed its own Pentagon deal in March 2026, retaining ‘full discretion’ over its safety stack after backlash from employees, a compromise that still grants broad classified access. Elon Musk’s xAI has been granted broad classified AI access without the same public scrutiny. But Anthropic’s refusal to accept ‘any lawful purpose’ language stands as a sharp contrast. Anthropic’s position is that explicit restrictions on autonomous weapons and domestic surveillance are non-negotiable, even if it costs the company Pentagon contracts. The Pentagon’s response—treating Anthropic as a supply chain risk—reveals the real leverage: whoever refuses to play by Pentagon rules gets frozen out.

Google’s expanded deal also marks a reversal from February 2026, when the company withdrew from a $100 million Pentagon drone swarm program after internal ethics review. That decision suggested Google was willing to walk away from lucrative contracts over ethical concerns. The April amendment suggests otherwise. The company is willing to expand AI access to classified networks as long as it does not have to explicitly build autonomous weapons itself.

The non-binding safeguards problem

Contract language banning surveillance and weapons ‘without appropriate human oversight’ sounds reassuring until you examine what it actually restricts. It does not ban surveillance; it requires a human to approve it. It does not ban autonomous weapons; it requires human involvement in the decision to deploy them. Legal experts quoted in industry analyses note the language is essentially unenforceable—the Pentagon decides what ‘appropriate human oversight’ means, and Google has no recourse.

This is the crux of the employee opposition. Google cannot control how the Pentagon uses Gemini once it has access. The company can set safety filters, but the Pentagon can ask Google to adjust them. Google can refuse, but then it loses the contract. The practical leverage belongs entirely to the Pentagon. A commitment to avoid misuse means nothing if the other party defines misuse as whatever serves national security.

Why this matters right now

The Google Pentagon AI deal arrives at a moment when the entire AI industry is negotiating its relationship with defense. Anthropic’s refusal to bend on safety terms is being treated as a liability rather than a principle. OpenAI’s compromise—retaining safety discretion while granting classified access—has become the industry standard. Google’s expansion to classified networks signals that the race for Pentagon dollars is overriding internal ethics objections. Over 600 employees said no; Google’s leadership said yes anyway.

The irony is sharp. Google spent years cultivating an image as the ethical AI company, the one that would not build weapons, the one that had principles. The ‘Don’t Be Evil’ motto, even after removal from the code of conduct, still defined how many people understood Google’s mission. That image is now actively contradicted by a contract that permits classified AI access for ‘any lawful government purpose’ with no binding restrictions on how it is used. The Pentagon will decide what is lawful. Google will adjust its safety settings on request. The employees who objected will watch from the sidelines.

Is Google’s Pentagon deal legally binding on safety restrictions?

No. The contract’s language restricting domestic mass surveillance and autonomous weapons ‘without appropriate human oversight’ is non-binding because it does not define who determines appropriateness or grant Google veto power. The Pentagon retains full discretion over operational decisions, and Google has no contractual right to control or veto them.

Did Google withdraw from other Pentagon programs?

Yes. In February 2026, Google exited a $100 million Pentagon drone swarm program following internal ethics review. The company’s expansion to classified Gemini access just two months later suggests the withdrawal was about the specific program, not about Pentagon work generally.

Why did Anthropic reject similar Pentagon terms?

Anthropic refused ‘any lawful purpose’ language in February 2026, insisting on explicit restrictions against autonomous weapons and domestic surveillance. The Pentagon responded by labeling Anthropic a supply chain risk, and litigation is ongoing. Anthropic’s stance prioritizes ethical constraints over Pentagon contracts; Google chose the opposite path.

Google’s Pentagon AI deal marks the moment when the company’s stated commitment to responsible AI collided with the reality of defense contracting and chose the contract. Over 600 employees objected. Anthropic refused similar terms. OpenAI compromised. Google expanded. The question now is not whether the company will misuse Gemini—it is whether the Pentagon will, and whether Google will have any meaningful way to object when it does.

This article was written with AI assistance and editorially reviewed.

Source: TechRadar