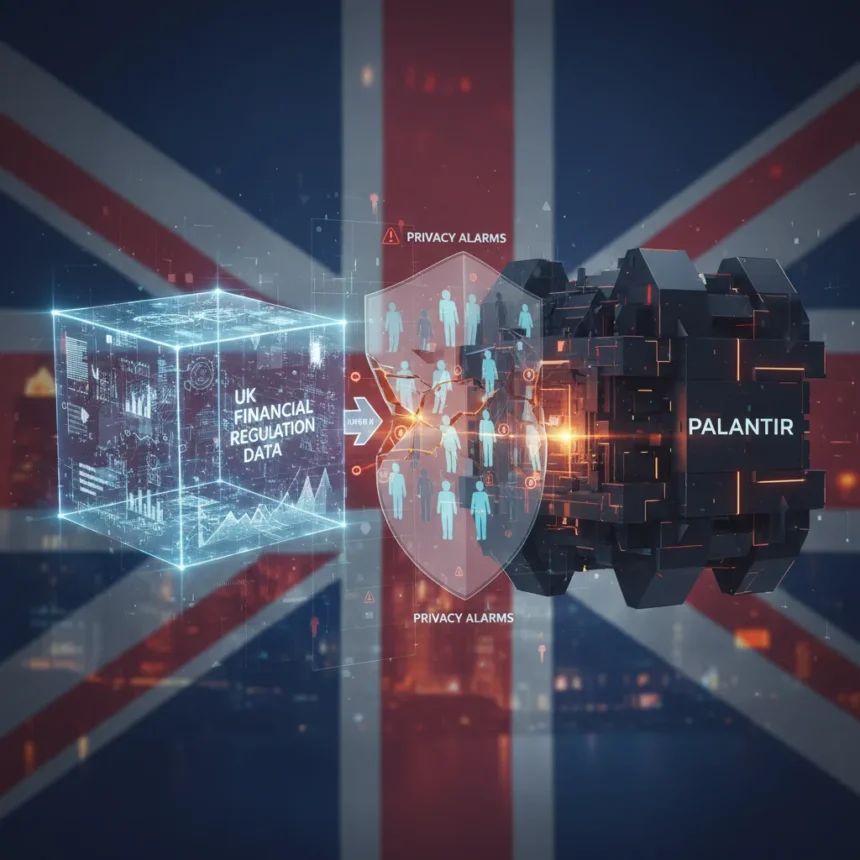

The UK Financial Conduct Authority has granted Palantir access to highly sensitive financial regulation data as part of a three-month trial to detect fraud and money laundering, a move that has triggered significant privacy concerns about entrusting critical UK financial oversight to a US-based AI company.

Key Takeaways

- The FCA is providing Palantir with access to a data lake containing sensitive UK financial regulation information.

- This three-month trial tests Palantir’s AI platform on financial crime intelligence including fraud and money laundering detection.

- Privacy advocates and officials have raised “very significant privacy concerns” about the arrangement.

- Palantir will process large volumes of data to determine if it is a suitable long-term partner for the FCA.

- The arrangement represents a major expansion of US AI involvement in UK financial sector oversight.

What the FCA-Palantir Data Sharing Arrangement Means

The privacy of financial regulation data sits at the intersection of national security, consumer protection, and institutional trust. The FCA’s decision to open its data lake, a massive repository of UK financial intelligence, to Palantir marks a significant shift in how Britain’s financial watchdog tackles financial crime. Rather than building or licensing domestic AI tools, the regulator is outsourcing core intelligence processing to a US company known for its work with government, intelligence, and defense agencies. The three-month trial will determine whether Palantir’s platform can effectively identify patterns of fraud, money laundering, and other financial crimes at scale.

This arrangement is not a permanent partnership but an evaluation period. Palantir will process huge amounts of the FCA’s data to demonstrate whether it delivers value and reliability. However, the trial itself raises an immediate tension: to prove its worth, Palantir must access sensitive financial regulation data that could reveal vulnerabilities in the UK’s financial system, individual bank transaction patterns, and regulatory enforcement strategies. Once shared, that data cannot be unshared.

Why Privacy Concerns Are Mounting

The privacy objections are not abstract. As a US company, Palantir is subject to US law, including potential government demands for access under surveillance statutes such as the Foreign Intelligence Surveillance Act. The FCA’s data lake contains information about UK banks, financial institutions, and individuals caught up in regulatory investigations. If that intelligence is stored or processed on US servers, or is accessible to US authorities, it could fall outside the direct control of UK data protection frameworks.

These concerns deepen when you consider what regulatory data actually contains. Transaction patterns can reveal business strategies. Enforcement case details can expose regulatory gaps. Customer information in financial crime investigations, even when anonymized, can be re-identified by cross-referencing it with other datasets. Palantir’s business model centers on data integration and pattern matching: connecting disparate datasets to find hidden relationships. The same capability that makes it valuable for crime detection also makes it powerful for surveillance, whether through misuse or through access compelled by a foreign government.

The “very significant privacy concerns” raised about the arrangement reflect a broader anxiety about data sovereignty. UK regulators are entrusting intelligence to a foreign company in an era of heightened geopolitical tension and competing claims over data governance. Unlike those of a UK-based vendor, Palantir’s decisions about data retention, access logs, and security protocols may not be directly answerable to British oversight bodies.

Financial Regulation Data Privacy in the Broader Tech Governance Picture

This trial arrives at a moment when governments worldwide are reassessing how much sensitive data they should entrust to US tech firms. The European Union has tightened data transfer rules. Australia has scrutinized foreign AI involvement in government contracts. The UK, post-Brexit, is still defining its own data sovereignty framework. Handing the FCA’s financial intelligence to Palantir tests whether Britain is willing to prioritize security and crime-fighting efficiency over data control, and whether that trade-off is worth the privacy risk.

The three-month window is both a safeguard and a vulnerability. Short-term trials allow regulators to assess risk before full deployment, but they also create pressure to show results quickly. If Palantir demonstrates early wins in fraud detection, the FCA may feel compelled to expand the arrangement and deepen data sharing, even if privacy questions remain unresolved. Conversely, if the trial is cancelled because of privacy pushback, that would signal the UK is unwilling to cede control of financial intelligence to foreign AI vendors, a position that could constrain future partnerships with US tech companies in other sensitive sectors.

Is the FCA-Palantir arrangement permanent?

No. The FCA is running a three-month trial to evaluate whether Palantir is the right long-term partner. The trial is designed to test the platform’s effectiveness on financial crime detection before any permanent contract is negotiated. However, the trial itself involves sharing sensitive data, which creates immediate privacy exposure.

What types of financial crimes is Palantir being asked to detect?

Palantir’s platform will focus on fraud and money laundering detection, processing the FCA’s regulatory intelligence to identify patterns and suspicious activity. The AI system is intended to help regulators spot financial crimes at scale across the UK’s banking and financial services sector.

Why are privacy advocates concerned about US companies accessing UK financial data?

Palantir is a US-based company, meaning its data handling is subject to US law and the data it processes could be accessible to US government authorities under surveillance statutes. This places sensitive UK financial regulation data outside direct British regulatory control, raising concerns about data sovereignty and the security of national financial intelligence.

The FCA’s decision to trial Palantir on its financial regulation data reflects a calculated risk, weighing the potential security gains from advanced AI crime detection against the loss of exclusive control over Britain’s financial intelligence. Whether that gamble pays off depends not just on Palantir’s technical performance but on whether the privacy trade-offs can be managed and whether UK oversight mechanisms prove robust enough to protect sensitive data in foreign hands. For now, the three-month window will test both the technology and the politics of outsourcing financial crime detection to a US vendor.

This article was written with AI assistance and editorially reviewed.

Source: TechRadar